dbt Cloud vs Dagster vs Prefect: Data Orchestration 2026

Compare dbt Cloud, Dagster, and Prefect for 2026 data orchestration: transformations, assets, scheduling, observability, deployment, and team fit.

TL;DR

Data orchestration choices in 2026 are less about which scheduler can run a cron job and more about where your team wants the data platform's source of truth to live.

dbt Cloud is the best choice when analytics engineering is the center of gravity. If most of your work is SQL transformations, semantic models, tests, docs, exposures, and governed deployment from Git, dbt Cloud gives the team the most direct path from model change to trusted warehouse table.

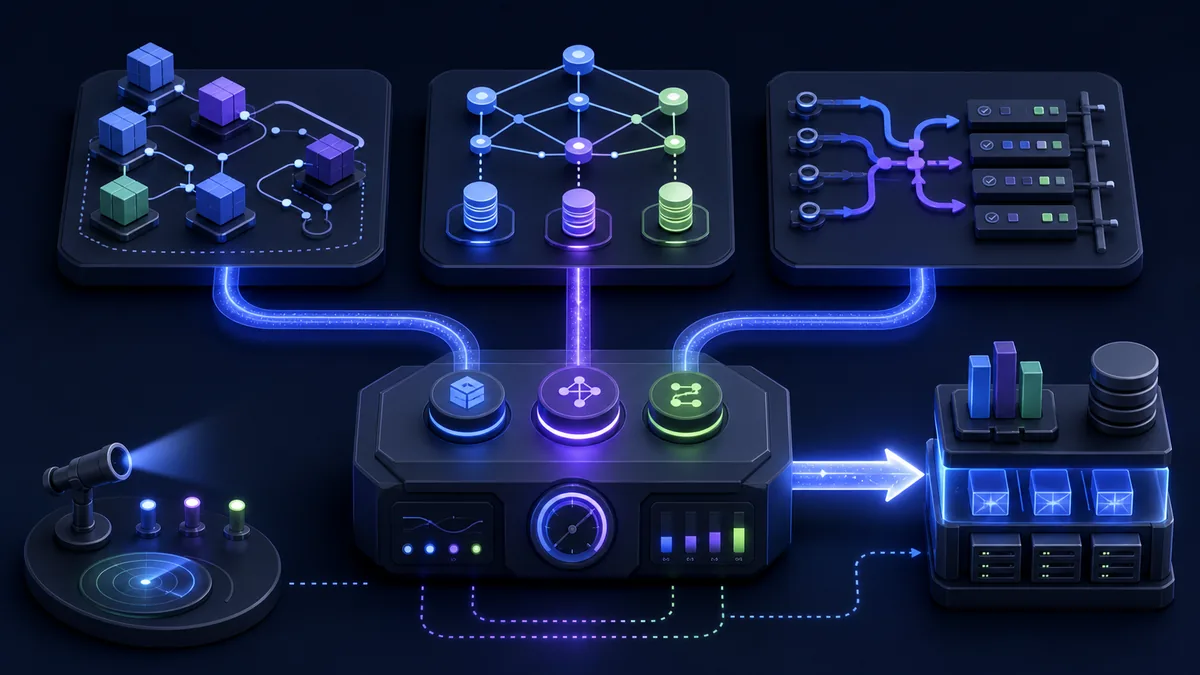

Dagster is the best choice when the platform needs to model data assets across tools. It treats tables, files, ML features, dashboards, and jobs as assets with lineage and metadata. That makes it stronger for engineering-heavy teams coordinating ingestion, transformation, quality, and downstream dependencies.

Prefect is the best choice when workflows are mostly Python automation and operational flexibility matters more than a strict asset graph. It is straightforward to run, easy to adopt incrementally, and comfortable for teams that already think in tasks, flows, retries, and deployment environments.

Quick decision table

| Team situation | Best shortlist | Why |
|---|---|---|
| Your data work is mostly SQL models and dbt tests | dbt Cloud | Native dbt development, scheduling, docs, CI, and deployments are the core workflow. |
| You need asset lineage across ingestion, dbt, ML, and dashboards | Dagster | Software-defined assets make the dependency graph explicit across systems. |
| You need Python-first automation with simple retries and schedules | Prefect | The flow/task model is lightweight and easy to roll into existing Python code. |
| You already have dbt but pipelines are getting hard to reason about | dbt Cloud + Dagster | Let dbt own transformations while Dagster coordinates assets around them. |
| Your team is small and just needs reliable scheduled jobs | Start with dbt Cloud or Prefect | Avoid adopting a platform graph before ownership and failure modes are clear. |

What orchestration has to do now

A useful orchestration layer has to answer six operational questions:

- What assets or jobs are supposed to exist?

- What upstream data does each one depend on?

- What changed since the last successful run?

- Which checks prove the output can be trusted?

- Who owns the failure when something breaks?

- What downstream dashboards, models, or workflows are affected?

Traditional schedulers mostly answered the first question. Modern data teams need all six. The difference between dbt Cloud, Dagster, and Prefect is how much structure each tool imposes to make those answers visible.
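To make the six questions concrete, here is a minimal sketch of what an orchestration layer has to record, written in plain Python so it runs without any of the three tools installed. `AssetRecord`, `downstream_of`, and the asset names are hypothetical illustrations, not dbt Cloud, Dagster, or Prefect APIs. The point is that ownership, checks, and upstream edges have to live somewhere before "what is affected downstream?" can be answered mechanically.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AssetRecord:
    """Hypothetical registry entry: what should exist, who owns it, what it reads, how it is checked."""
    name: str
    owner: str
    upstream: list[str] = field(default_factory=list)
    checks: list[str] = field(default_factory=list)


def downstream_of(registry: dict[str, AssetRecord], failed: str) -> list[str]:
    """Return every asset that transitively depends on `failed`, nearest first."""
    # Invert the upstream edges so we can walk from a failure toward its consumers.
    children: dict[str, list[str]] = {name: [] for name in registry}
    for record in registry.values():
        for parent in record.upstream:
            children.setdefault(parent, []).append(record.name)

    seen: set[str] = set()
    order: list[str] = []
    queue = deque(children.get(failed, []))
    while queue:  # breadth-first, so direct consumers are listed before indirect ones
        name = queue.popleft()
        if name in seen:
            continue
        seen.add(name)
        order.append(name)
        queue.extend(children.get(name, []))
    return order


registry = {
    "raw_orders": AssetRecord("raw_orders", owner="ingestion"),
    "stg_orders": AssetRecord("stg_orders", owner="analytics", upstream=["raw_orders"], checks=["not_null:order_id"]),
    "fct_revenue": AssetRecord("fct_revenue", owner="analytics", upstream=["stg_orders"], checks=["unique:order_id"]),
    "revenue_dashboard": AssetRecord("revenue_dashboard", owner="bi", upstream=["fct_revenue"]),
}

print(downstream_of(registry, "raw_orders"))  # ['stg_orders', 'fct_revenue', 'revenue_dashboard']
```

Each asset declares only its own upstream inputs, and the downstream view is derived on demand. That keeps definitions local while still answering the impact question.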

dbt Cloud: best for analytics engineering workflows

dbt Cloud is strongest when the warehouse transformation layer is the real platform. It gives analytics engineers a managed surface for developing dbt models, running jobs, reviewing pull requests, publishing docs, monitoring tests, and coordinating deployments.

Choose dbt Cloud when the central question is: "Can we ship trustworthy SQL models faster?" The product is built around that workflow. It understands models, sources, tests, snapshots, exposures, docs, environments, and job runs without glue code. For teams whose main deliverable is dbt models on Snowflake, BigQuery, Databricks, or Postgres, that native context matters more than having a general-purpose orchestrator.

The tradeoff is scope. dbt Cloud orchestrates dbt very well, but it is not trying to be the universal control plane for every Python job, ingestion tool, reverse ETL sync, or ML pipeline. Many teams still pair it with another orchestrator when pipelines extend beyond transformation.
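When another orchestrator sits in front of dbt Cloud, the usual handoff is an API call that starts a dbt Cloud job once upstream work finishes. The sketch below builds, but does not send, a request to the dbt Cloud v2 "trigger job run" endpoint using only the standard library. The host, account ID, job ID, and token are placeholders (many accounts use a region- or account-specific access URL), so check the current dbt Cloud API reference before relying on the exact path or payload.

```python
import json
import urllib.request

# Assumption: the US multi-tenant host. Your account may use a different access URL.
DBT_CLOUD_HOST = "https://cloud.getdbt.com"


def build_job_trigger(account_id: int, job_id: int, token: str, cause: str) -> urllib.request.Request:
    """Build (but do not send) a POST that asks dbt Cloud to start a job run."""
    url = f"{DBT_CLOUD_HOST}/api/v2/accounts/{account_id}/jobs/{job_id}/run/"
    body = json.dumps({"cause": cause}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Authorization": f"Token {token}", "Content-Type": "application/json"},
    )


req = build_job_trigger(account_id=123, job_id=456, token="<service-token>", cause="Upstream ingestion finished")
# An external orchestrator would send this with urllib.request.urlopen(req) and then track the run.
print(req.get_method(), req.full_url)  # POST https://cloud.getdbt.com/api/v2/accounts/123/jobs/456/run/
```

The `cause` string is worth filling with something specific, since it shows up next to the run in dbt Cloud and tells whoever is debugging which upstream event kicked it off.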

Dagster: best for asset-centric data platforms

Dagster's bet is that data platforms should be modeled as assets, not just tasks. A dataset, dbt model, file, notebook output, or ML feature can be represented as a software-defined asset with dependencies, metadata, partitions, checks, and materialization history.

Choose Dagster when lineage and coordination are the hard parts. If ingestion jobs, dbt models, feature pipelines, and dashboards all interact, a task-only graph can hide the real data contract. Dagster makes the asset graph first-class, so engineers can see what exists, what depends on it, and which materializations are stale or failed.
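The staleness idea can be sketched without Dagster itself. The function below uses a deliberately simplified rule, assumed for illustration: an asset is stale if it was never materialized, if an upstream asset was materialized after it, or if an upstream asset is already stale. Dagster's own staleness tracking considers more than timestamps (code versions, for example), so treat this as a model of the concept, not of Dagster's implementation. It uses `graphlib` from the Python 3.9+ standard library.

```python
from datetime import datetime
from graphlib import TopologicalSorter
from typing import Optional


def stale_assets(
    upstream: dict[str, list[str]],
    last_materialized: dict[str, Optional[datetime]],
) -> set[str]:
    """Flag assets that are missing, older than an upstream, or fed by a stale upstream."""
    stale: set[str] = set()
    # static_order() yields parents before children, so staleness propagates downstream.
    for name in TopologicalSorter(upstream).static_order():
        own = last_materialized.get(name)
        parents = upstream.get(name, [])
        if own is None or any(p in stale for p in parents):
            stale.add(name)
            continue
        for parent in parents:
            parent_time = last_materialized.get(parent)
            if parent_time is not None and parent_time > own:
                stale.add(name)
                break
    return stale


upstream = {
    "raw_orders": [],
    "stg_orders": ["raw_orders"],
    "fct_revenue": ["stg_orders"],
    "raw_customers": [],
    "dim_customers": ["raw_customers"],
}
last_materialized = {
    "raw_orders": datetime(2026, 1, 15, 9, 0),
    "stg_orders": datetime(2026, 1, 15, 8, 0),    # older than its upstream
    "fct_revenue": datetime(2026, 1, 15, 8, 30),  # newer than stg_orders, but fed by a stale asset
    "raw_customers": datetime(2026, 1, 15, 7, 0),
    "dim_customers": datetime(2026, 1, 15, 7, 30),
}
print(sorted(stale_assets(upstream, last_materialized)))  # ['fct_revenue', 'stg_orders']
```

A task-only graph would report every job here as "succeeded." The asset view is what surfaces that `fct_revenue` is built on outdated inputs.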

Dagster also fits teams that want orchestration code to feel like product code: typed Python definitions, local development, code review, reusable components, and explicit metadata. The cost is conceptual weight. A small team with five dbt jobs may not need a full asset platform yet. A growing platform team usually appreciates the structure once ownership and dependencies multiply.

Prefect: best for Python-first operational workflows

Prefect has the lightest mental model of the three. You decorate Python functions as tasks and flows, then add scheduling, retries, parameters, logging, and deployment infrastructure. It is especially comfortable for teams that already have Python scripts doing useful work and need to make them reliable without rewriting the whole platform.
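In Prefect itself, retries are declared on the decorator, for example `@task(retries=2, retry_delay_seconds=10)` on a function called from an `@flow`. To keep this example runnable without Prefect installed, the sketch below re-implements only the retry behavior with the standard library. `with_retries` and `flaky_api_sync` are hypothetical names, not Prefect APIs.

```python
import functools
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(retries: int, delay_seconds: float = 0.0) -> Callable[[Callable[..., T]], Callable[..., T]]:
    """Hypothetical stand-in for a task decorator: re-run the function when it raises."""
    def decorator(fn: Callable[..., T]) -> Callable[..., T]:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs) -> T:
            for attempt in range(retries + 1):
                try:
                    return fn(*args, **kwargs)
                except Exception:
                    if attempt == retries:
                        raise  # out of retries: surface the original error
                    time.sleep(delay_seconds)
            raise AssertionError("unreachable")
        return wrapper
    return decorator


calls = {"count": 0}


@with_retries(retries=2)
def flaky_api_sync() -> str:
    """Simulated API sync that fails twice with a transient error, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient API failure")
    return "synced"


print(flaky_api_sync(), calls["count"])  # synced 3
```

The existing function body does not change; reliability is layered on with a decorator. That is the property that makes Prefect easy to adopt incrementally on top of scripts a team already has.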

Choose Prefect when workflow code changes frequently, jobs mix APIs and Python logic, or the team values operational pragmatism over a centralized asset model. It can orchestrate dbt, ingestion scripts, API syncs, reporting jobs, and data science workflows without forcing every output into a formal asset graph on day one.

The tradeoff is that Prefect's flexibility can become looseness. Without naming conventions, ownership metadata, and data-quality checks, a fleet of flows can become a nicer cron system rather than a trusted data platform. Prefect works best when the team adds its own conventions deliberately.
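One way to add those conventions deliberately is a small lint step in CI that rejects flows missing a conventional name, an owner, or declared checks. The naming pattern, the `FlowSpec` fields, and `convention_violations` below are hypothetical team conventions, not Prefect features.

```python
import re
from dataclasses import dataclass, field

# Hypothetical convention: <domain>__<verb>_<object>, lowercase snake_case.
FLOW_NAME_PATTERN = re.compile(r"^[a-z]+__[a-z]+(_[a-z0-9]+)+$")


@dataclass
class FlowSpec:
    """Hypothetical metadata a team might require on every deployed flow."""
    name: str
    owner: str = ""
    quality_checks: list[str] = field(default_factory=list)


def convention_violations(spec: FlowSpec) -> list[str]:
    """Return human-readable problems; an empty list means the flow passes the lint."""
    problems = []
    if not FLOW_NAME_PATTERN.match(spec.name):
        problems.append(f"{spec.name}: name does not match <domain>__<verb>_<object>")
    if not spec.owner:
        problems.append(f"{spec.name}: missing owner")
    if not spec.quality_checks:
        problems.append(f"{spec.name}: no data-quality checks declared")
    return problems


print(convention_violations(FlowSpec("finance__sync_invoices", owner="finance-data", quality_checks=["row_count > 0"])))  # []
print(len(convention_violations(FlowSpec("nightly job"))))  # 3
```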

How they fit with the rest of the data stack

These tools rarely live alone. A realistic 2026 stack often looks like this:

| Layer | Common tools | Orchestration role |
|---|---|---|
| Ingestion | Fivetran, Airbyte, custom API syncs | Trigger, monitor, or model source availability. |
| Transformation | dbt Core / dbt Cloud | Build tested warehouse models and docs. |
| Warehouse | Snowflake, BigQuery, Databricks, Postgres | Store canonical tables and query outputs. |
| Quality | dbt tests, Great Expectations, Soda, warehouse checks | Block or alert on bad data. |
| Catalog / lineage | Atlan, DataHub, OpenMetadata, built-in lineage | Expose ownership and downstream impact. |
| Activation | Hightouch, Census, Reverse ETL jobs | Sync trusted data back into SaaS tools. |

In that architecture, dbt Cloud owns transformation workflows. Dagster can coordinate assets around dbt and expose broader lineage. Prefect can own Python automation and operational jobs. The wrong move is assuming one tool must replace the others. The better move is deciding which layer deserves to be the control plane.

Where teams usually go wrong

The first mistake is adopting a general orchestrator before the team has a clear data contract. If model ownership, source freshness, and test expectations are undefined, moving jobs into Dagster or Prefect will not fix trust. Start by defining what outputs matter and how they fail.
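Source freshness is one of the easiest contracts to write down. dbt expresses it with `warn_after` and `error_after` thresholds evaluated against a source's `loaded_at_field`. The plain-Python sketch below mirrors that two-threshold idea so it can run anywhere. The function name and the 12- and 24-hour thresholds are illustrative assumptions.

```python
from datetime import datetime, timedelta, timezone
from typing import Literal

Status = Literal["pass", "warn", "error"]


def freshness_status(
    last_loaded_at: datetime,
    now: datetime,
    warn_after: timedelta,
    error_after: timedelta,
) -> Status:
    """Classify a source by how long ago it last loaded (assumes warn_after < error_after)."""
    age = now - last_loaded_at
    if age > error_after:
        return "error"
    if age > warn_after:
        return "warn"
    return "pass"


now = datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc)
warn, error = timedelta(hours=12), timedelta(hours=24)
print(freshness_status(now - timedelta(hours=3), now, warn, error))   # pass
print(freshness_status(now - timedelta(hours=18), now, warn, error))  # warn
print(freshness_status(now - timedelta(hours=30), now, warn, error))  # error
```

Writing the thresholds down forces the team to agree on what "late" means for each source, which is exactly the contract an orchestrator cannot invent on its own.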

The second mistake is treating dbt Cloud as only a scheduler. Its value is not just timed runs; it is development workflow, environments, tests, docs, CI, lineage context, and deployment discipline for dbt projects. If those features matter, a generic scheduler plus dbt Core may look cheaper but cost more in operational glue.

The third mistake is ignoring incident response. The orchestrator should make failures actionable: which asset failed, what changed, who owns it, and what downstream work is blocked. If alerts only say "job failed," the team will still debug through Slack and warehouse queries.
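An actionable alert carries the answers to those questions in the message itself. The dataclass and formatter below are a hypothetical shape for that payload, not the alert format of any of the three tools. In practice the fields would be filled from the orchestrator's run metadata, Git history, and lineage graph; the example values are placeholders.

```python
from dataclasses import dataclass


@dataclass
class FailureContext:
    """Hypothetical fields an alert should carry beyond 'job failed'."""
    asset: str
    owner: str
    last_change: str  # what changed since the last successful run (commit, config, schema)
    blocked_downstream: list[str]
    failed_check: str


def format_alert(ctx: FailureContext) -> str:
    """Render one line that says what broke, who owns it, why, and what is blocked."""
    blocked = ", ".join(ctx.blocked_downstream) or "none"
    return (
        f"[{ctx.owner}] {ctx.asset} failed check '{ctx.failed_check}'. "
        f"Changed since last success: {ctx.last_change}. "
        f"Blocked downstream: {blocked}."
    )


ctx = FailureContext(
    asset="fct_revenue",
    owner="analytics",
    last_change="commit abc123 edited revenue logic",  # placeholder value
    blocked_downstream=["revenue_dashboard", "finance_export"],
    failed_check="unique:order_id",
)
print(format_alert(ctx))
```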

Recommended patterns

Pattern 1: Analytics-engineering-first team

Use dbt Cloud as the primary workflow. Keep ingestion in managed ELT where possible. Add data observability only after repeated incidents prove the need. This is the simplest path for teams whose core work is reliable warehouse models.

Pattern 2: Platform team with mixed data assets

Use Dagster as the orchestration and asset graph layer, with dbt integrated for transformations. Model ingestion outputs, dbt models, quality checks, and downstream exports as assets. This gives the platform team one place to reason about dependencies.
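As a rough illustration of "one place to reason about dependencies," the sketch below puts an ingestion output, a dbt staging model, a quality check, a mart, and a reverse ETL export into one graph and derives which assets could run in parallel at each step. It uses Python's standard `graphlib` module (3.9+) rather than Dagster, and the asset names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical platform graph: each asset maps to the assets it reads from.
platform_assets = {
    "fivetran_orders": set(),                                            # ingestion output
    "stg_orders": {"fivetran_orders"},                                   # dbt staging model
    "check_orders_not_null": {"stg_orders"},                             # quality check
    "fct_revenue": {"stg_orders"},                                       # dbt mart
    "hightouch_revenue_sync": {"fct_revenue", "check_orders_not_null"},  # downstream export
}


def execution_waves(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group assets into waves; everything in a wave has all of its inputs already built."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # also raises CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


for i, wave in enumerate(execution_waves(platform_assets)):
    print(f"wave {i}: {wave}")
```

Because the quality check is a node in the same graph, the export cannot run until the check has passed, which is the kind of cross-tool rule that is hard to express when each tool keeps its own schedule.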

Pattern 3: Python automation-heavy startup

Use Prefect to productionize existing Python jobs, API syncs, report generation, and lightweight dbt calls. Add stricter naming, ownership, and quality conventions as workflows become business-critical.
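A "lightweight dbt call" is often just dbt Core invoked as a subprocess from a Python job. The sketch assumes dbt Core is installed and on `PATH`, and that `prod` is a target defined in the project's `profiles.yml`. `dbt build`, `--select`, and `--target` are standard dbt CLI options; the helper names are hypothetical.

```python
import shutil
import subprocess


def dbt_build_command(select: str, target: str = "prod") -> list[str]:
    """Compose a `dbt build` invocation limited to one selector."""
    return ["dbt", "build", "--select", select, "--target", target]


def run_dbt_build(select: str, target: str = "prod") -> None:
    """Run dbt Core; raises CalledProcessError if dbt exits non-zero (e.g. a model or test fails)."""
    if shutil.which("dbt") is None:
        raise RuntimeError("dbt executable not found on PATH")
    subprocess.run(dbt_build_command(select, target), check=True)


print(dbt_build_command("tag:finance"))  # ['dbt', 'build', '--select', 'tag:finance', '--target', 'prod']
```

Raising on failure, instead of returning an exit code, lets a wrapping Prefect task treat a failed dbt build like any other exception, so its retry and alerting settings apply unchanged.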

Pattern 4: Regulated or high-trust data environment

Favor explicit checks, ownership, lineage, and deployment approvals over whichever tool feels fastest. dbt Cloud plus Dagster is often the stronger long-term architecture when auditability and dependency impact matter.
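A deployment approval can be reduced to an explicit gate: nothing is promoted while checks fail or while an owning team has not signed off on the assets it owns. The sketch below is a hypothetical gate a CI pipeline might run. `ReleaseCandidate`, its fields, and `deployment_blockers` are illustrative, not features of dbt Cloud or Dagster.

```python
from dataclasses import dataclass, field


@dataclass
class ReleaseCandidate:
    """Hypothetical summary of a change waiting to be promoted to production."""
    changed_assets: list[str]
    failed_checks: list[str] = field(default_factory=list)
    approvals: set[str] = field(default_factory=set)      # teams that have signed off
    owners: dict[str, str] = field(default_factory=dict)  # asset -> owning team


def deployment_blockers(rc: ReleaseCandidate) -> list[str]:
    """List every reason the change cannot ship yet; an empty list means it may deploy."""
    blockers = [f"check failed: {c}" for c in rc.failed_checks]
    for asset in rc.changed_assets:
        owner = rc.owners.get(asset)
        if owner is None:
            blockers.append(f"{asset}: no owner recorded")
        elif owner not in rc.approvals:
            blockers.append(f"{asset}: awaiting approval from {owner}")
    return blockers


rc = ReleaseCandidate(changed_assets=["fct_revenue"], owners={"fct_revenue": "finance-data"})
print(deployment_blockers(rc))  # ['fct_revenue: awaiting approval from finance-data']
```

Treating a missing owner as a blocker, not a warning, is the design choice that keeps the audit trail complete: every production change maps to a team that approved it.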

The practical recommendation

Start from the team's dominant workflow:

- If your team says "model," "test," "exposure," and "semantic layer" all day, start with dbt Cloud.

- If your team says "asset," "lineage," "partition," and "downstream impact," evaluate Dagster.

- If your team says "Python script," "retry," "API sync," and "deployment environment," evaluate Prefect.

For many mid-sized data teams, the end state is not a single winner. dbt Cloud remains the transformation workflow, Dagster coordinates the asset graph, and Prefect either handles isolated automation or never enters the stack. The important decision is sequencing: adopt the smallest orchestration surface that makes current failures easier to understand, then expand only when the dependency graph demands it.
