
Data Observability Tools 2026: Monte Carlo vs Bigeye vs Soda vs Metaplane

Compare data observability tools for 2026: Monte Carlo, Bigeye, Soda, and Metaplane across freshness, volume, schema, lineage, alerting, and warehouse cost control.

TL;DR

Data observability tools catch broken pipelines before executives, customers, or downstream teams do. Monte Carlo is the strongest enterprise shortlist when you need broad automated monitoring, lineage context, incident workflows, and stakeholder trust. Bigeye fits teams that want strong metric-level monitoring and anomaly detection across critical tables. Soda is the best fit when you want code-driven data quality checks that can live close to engineering workflows. Metaplane is a practical option for teams that want a focused SaaS data observability layer without buying the heaviest enterprise platform first.

The category overlaps with data testing, cataloging, lineage, and incident management, but it solves a specific problem: detecting when data is late, missing, malformed, duplicated, unexpectedly distributed, or no longer trustworthy. The right choice depends on whether you need an enterprise trust layer, an engineering-first test framework, or a simpler alerting surface for high-value datasets.

Quick decision table

| Team situation | Best shortlist | Why |
|---|---|---|
| You need enterprise-wide data trust and incident workflows | Monte Carlo | Broad automated monitors, lineage context, and executive-friendly trust positioning. |
| You need anomaly detection on key metrics and tables | Bigeye | Strong monitoring model for freshness, volume, distributions, and business-critical signals. |
| You want checks as code inside engineering workflows | Soda | Data quality checks can live closer to repositories, CI, and pipeline ownership. |
| You want a focused SaaS data observability rollout | Metaplane | Useful for teams that need monitoring quickly without the heaviest governance program. |
| You have only a few fragile pipelines | Start with dbt tests plus alerts | A full observability platform may be premature before failure patterns are clear. |

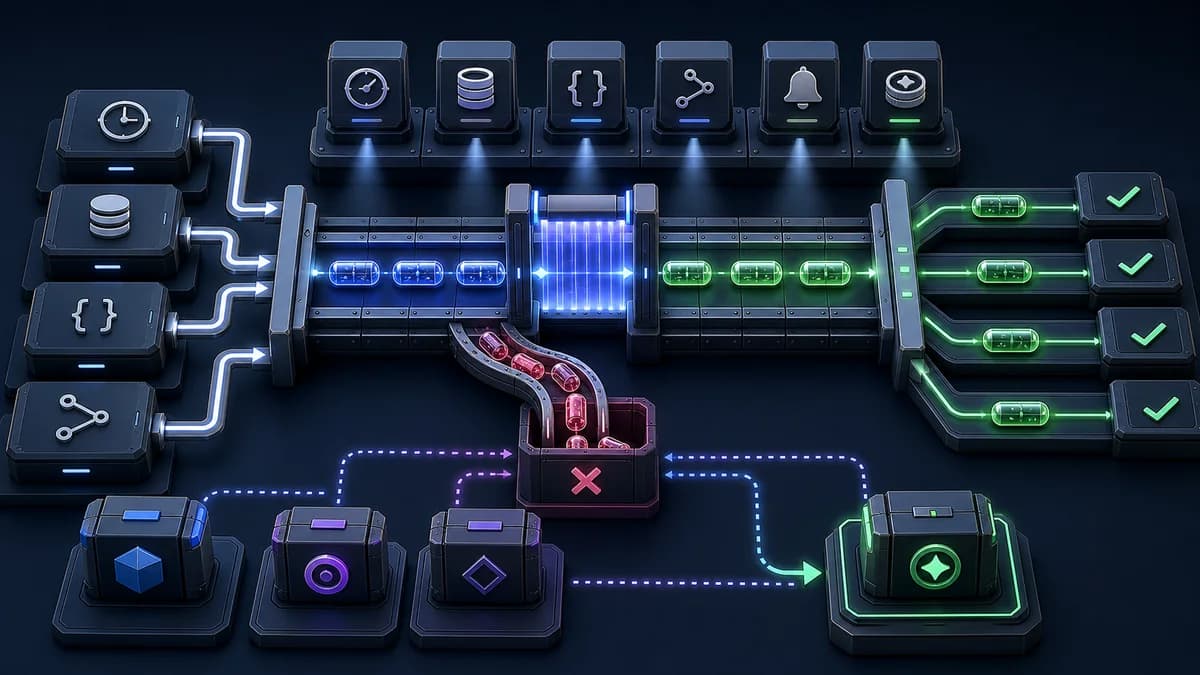

What data observability should monitor

A useful data observability program usually starts with these signals:

- Freshness: did the dataset update when expected?

- Volume: did row counts spike, drop, or flatline?

- Schema: did columns, types, or contracts change unexpectedly?

- Distribution: did important values drift outside normal ranges?

- Nulls and uniqueness: did key fields become incomplete or duplicated?

- Lineage impact: which dashboards, models, customers, or workflows are affected?

- Ownership: who receives the alert and who can fix the upstream cause?

The tool is only half the system. You also need runbooks, severity levels, owners, alert routing, and a way to close the loop after incidents.
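To make the first three signals concrete, here is a minimal sketch of freshness, volume, and schema checks in plain Python. It is not any vendor's implementation. The function names, the 30-minute grace period, and the column-to-type dictionaries are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def is_stale(last_updated: datetime, expected_interval: timedelta,
             now: datetime, grace: timedelta = timedelta(minutes=30)) -> bool:
    """Freshness: True when the dataset missed its expected update window."""
    return now - last_updated > expected_interval + grace


def volume_change(previous_rows: int, current_rows: int) -> float:
    """Volume: relative change in row count versus the previous load."""
    if previous_rows == 0:
        return float("inf") if current_rows > 0 else 0.0
    return (current_rows - previous_rows) / previous_rows


def schema_diff(expected: dict[str, str], actual: dict[str, str]) -> dict[str, list[str]]:
    """Schema: columns that disappeared, appeared, or changed type."""
    shared = expected.keys() & actual.keys()
    return {
        "missing": sorted(set(expected) - set(actual)),
        "unexpected": sorted(set(actual) - set(expected)),
        "retyped": sorted(c for c in shared if expected[c] != actual[c]),
    }
```

In practice the inputs would come from warehouse metadata (last load timestamps, row counts, column definitions), and each result would feed an alert threshold that the owning team tunes.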

Monte Carlo: best enterprise data observability platform

Monte Carlo is the default enterprise shortlist for teams treating data quality as a reliability discipline. It is strongest when the organization needs automated monitoring across warehouses, pipelines, BI assets, and data products, plus lineage and workflow context for incidents.

Choose Monte Carlo when broken data creates executive escalations, customer-facing issues, or regulatory risk. Its value increases when many teams consume shared data and nobody can afford to debug quality problems manually through warehouse queries and Slack threads.

The tradeoff is cost and rollout weight. Monte Carlo can be more platform than a small team needs. Before committing, validate alert precision, warehouse query cost, lineage coverage, and whether the organization will actually staff data incident ownership.
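One way to reason about lineage coverage during a trial is to ask which downstream assets a broken table would reach. The sketch below walks a hand-written lineage graph breadth-first. The graph shape (a dictionary from asset to direct consumers) and the asset names are hypothetical, and this is not how Monte Carlo or any other vendor stores lineage.

```python
from collections import deque


def downstream_assets(lineage: dict[str, list[str]], source: str) -> list[str]:
    """Return every asset reachable from `source`, nearest consumers first."""
    seen = {source}
    order: list[str] = []
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Hypothetical lineage: raw table -> staging model -> fact tables -> dashboard.
lineage = {
    "raw.orders": ["stg.orders"],
    "stg.orders": ["fct.revenue", "fct.orders"],
    "fct.revenue": ["dash.exec_kpis"],
}
impacted = downstream_assets(lineage, "raw.orders")
```

If a tool's lineage view cannot reproduce an impact list like this for your critical assets, that gap is worth raising before purchase.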

Bigeye: best for metric and table monitoring depth

Bigeye is a strong fit for teams that want deep monitoring around tables, metrics, and anomaly detection. It can help data teams watch high-value assets for freshness, volume, distribution changes, and data-quality regressions.

Choose Bigeye when your pain is not just missing tests but noisy, hard-to-diagnose data drift. It is useful when business metrics depend on several upstream tables and a subtle distribution shift can be as damaging as a failed pipeline.

As with any observability tool, alert quality matters more than dashboard volume. Test Bigeye against your actual noisy datasets and confirm the team can tune alerts down to useful signals.
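To illustrate what tuning an alert means, here is a minimal z-score sketch for a single metric. It is a generic statistical technique, not Bigeye's detection model, and the function name and default threshold of 3.0 are assumptions. Raising the threshold trades sensitivity for fewer false alarms.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` when it sits more than `z_threshold` standard deviations
    away from the mean of recent history."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # flat history: any change is notable
    return abs(latest - mu) / sigma > z_threshold
```

A simple detector like this ignores seasonality and trend, which is one reason to test any tool against your own noisy datasets rather than clean demo data.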

Soda: best for checks-as-code data quality

Soda fits best when data quality needs to behave like software quality. Teams can define checks, run them near pipelines, and integrate quality gates into development and CI workflows.

Choose Soda when engineers and analytics engineers want explicit assertions around datasets: accepted ranges, missing values, schema expectations, uniqueness, referential integrity, or freshness rules. It is especially useful when data quality ownership lives close to dbt, orchestration, or repository workflows.

The tradeoff is that checks-as-code requires discipline. You need people to write and maintain checks. Automated anomaly detection may catch unknown unknowns; explicit tests catch known expectations. Many teams eventually need both.
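The idea of explicit assertions can be sketched in plain Python. This deliberately does not use Soda's own check syntax. The helper names, the `order_id` and `amount` columns, and the 0 to 10,000 range are hypothetical examples of known expectations a team might encode.

```python
def not_null(rows: list[dict], column: str) -> bool:
    """Every row has a non-null value for the column."""
    return all(row.get(column) is not None for row in rows)


def unique(rows: list[dict], column: str) -> bool:
    """No value appears more than once in the column."""
    values = [row.get(column) for row in rows]
    return len(values) == len(set(values))


def in_range(rows: list[dict], column: str, low: float, high: float) -> bool:
    """All non-null values fall inside [low, high]."""
    return all(low <= row[column] <= high
               for row in rows if row.get(column) is not None)


def failed_checks(rows: list[dict]) -> list[str]:
    """Run the declared checks and return the names of those that failed."""
    checks = {
        "order_id_not_null": not_null(rows, "order_id"),
        "order_id_unique": unique(rows, "order_id"),
        "amount_in_range": in_range(rows, "amount", 0, 10_000),
    }
    return [name for name, passed in checks.items() if not passed]
```

A CI job or pipeline step could fail the run whenever `failed_checks` returns a non-empty list, which is the quality-gate behavior described above.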

Metaplane: best focused SaaS rollout

Metaplane is a pragmatic option for teams that want SaaS data observability without starting with the largest enterprise program. It focuses on monitoring pipelines and warehouse assets so teams can detect incidents earlier and route them to owners.

Choose Metaplane when your team has real data reliability pain but still wants a lightweight operational rollout. It can be a good middle path for analytics engineering teams that need freshness, volume, schema, and anomaly alerts before they invest in a broader governance platform.

Validate connector coverage, alert routing, lineage depth, and pricing against your actual warehouse footprint. A focused product is only useful if it watches the assets that really break.

Evaluation workflow

- Pick ten critical data assets: executive metrics, revenue tables, product events, customer-facing exports, and ML features if relevant.

- List recent failures for those assets and classify them by freshness, volume, schema, distribution, nulls, duplicates, or downstream impact.

- Test each tool on those failure modes instead of generic demo datasets.

- Measure alert noise for at least two normal pipeline cycles.

- Confirm lineage can show downstream dashboards, models, and owners.

- Estimate warehouse query cost from monitoring.

- Write the incident workflow: who is paged, who investigates, who informs stakeholders, and how fixes are documented.
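The alert-noise step above can be made measurable. One rough approach is to label each alert from the trial period as actionable or not, then compute the share of actionable alerts per monitor. The record shape and monitor names below are assumptions for illustration, not an export format from any tool.

```python
from collections import defaultdict


def precision_by_monitor(alerts: list[dict]) -> dict[str, float]:
    """Share of alerts per monitor that a human judged actionable."""
    totals: dict[str, int] = defaultdict(int)
    useful: dict[str, int] = defaultdict(int)
    for alert in alerts:
        totals[alert["monitor"]] += 1
        if alert["actionable"]:
            useful[alert["monitor"]] += 1
    return {monitor: useful[monitor] / totals[monitor] for monitor in totals}


# Hypothetical alerts collected over two pipeline cycles.
alerts = [
    {"monitor": "orders_freshness", "actionable": True},
    {"monitor": "orders_freshness", "actionable": True},
    {"monitor": "events_volume", "actionable": False},
    {"monitor": "events_volume", "actionable": True},
    {"monitor": "events_volume", "actionable": False},
    {"monitor": "events_volume", "actionable": False},
]
report = precision_by_monitor(alerts)
```

Monitors with low scores are candidates for threshold tuning or removal before the rollout expands.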

Common mistakes

The first mistake is enabling too many alerts. If every warning routes to Slack, people will mute the channel. Start with critical assets, tune thresholds, and expand only when the signal is useful.

The second mistake is ignoring warehouse cost. Observability queries can become meaningful spend at scale. Ask each vendor how monitoring frequency, table size, profiling depth, and retention affect warehouse usage.
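A back-of-the-envelope estimate helps frame that vendor conversation. The sketch below assumes a warehouse billed by data scanned. Compute-time billing needs a different formula, and the check frequencies, scan sizes, and price per terabyte are made-up inputs, not vendor figures.

```python
def monthly_monitoring_cost(tables: list[dict], price_per_tb_scanned: float,
                            days: int = 30) -> float:
    """Estimate monthly spend as checks/day x TB scanned per check x price."""
    tb_per_day = sum(t["checks_per_day"] * t["tb_scanned_per_check"] for t in tables)
    return tb_per_day * days * price_per_tb_scanned


# Hypothetical footprint: one small table checked hourly, one large table four times a day.
tables = [
    {"name": "orders", "checks_per_day": 24, "tb_scanned_per_check": 0.01},
    {"name": "events", "checks_per_day": 4, "tb_scanned_per_check": 0.5},
]
estimate = monthly_monitoring_cost(tables, price_per_tb_scanned=5.0)
```

With these inputs the large table dominates the total even though it is checked far less often, which is why profiling depth and table size matter as much as frequency.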

The third mistake is separating observability from ownership. Alerts without owners are just dashboards with anxiety. Every monitored asset should have a responsible team and a runbook.
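Ownership can be enforced in routing logic rather than left to convention. This is a minimal sketch: the `Owner` fields, the severity labels, the triage channel name, and the example URL are all hypothetical, and a real setup would live in your alerting or on-call tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Owner:
    team: str
    channel: str
    runbook_url: str


def route_alert(asset: str, severity: str, owners: dict[str, Owner]) -> dict:
    """Send an alert to the asset's owner, or to triage if nobody owns it."""
    owner: Optional[Owner] = owners.get(asset)
    if owner is None:
        # Unowned assets surface in a triage queue instead of a general channel.
        return {"asset": asset, "target": "unowned-assets-triage",
                "page": False, "runbook": None}
    return {"asset": asset, "target": owner.channel,
            "page": severity == "critical", "runbook": owner.runbook_url}


owners = {
    "fct.revenue": Owner("finance-data", "#finance-data-alerts",
                         "https://example.com/runbooks/revenue"),
}
routed = route_alert("fct.revenue", "critical", owners)
orphaned = route_alert("stg.legacy_events", "warning", owners)
```

Counting how many alerts land in the triage queue is a quick way to see how much of the monitored estate still lacks an owner.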

Verdict

Pick Monte Carlo for enterprise data reliability and broad trust workflows. Pick Bigeye for strong anomaly monitoring around important tables and metrics. Pick Soda for engineering-owned checks-as-code. Pick Metaplane for a focused SaaS rollout that can cover core freshness, volume, schema, and anomaly issues without starting too heavy.

The best rollout is small and operationally real. Monitor the datasets that already cause incidents, prove the alerts are actionable, then expand coverage once the team trusts the signal.

FAQ

Is data observability the same as data testing?

No. Data testing usually encodes expected rules. Data observability adds monitoring, anomaly detection, lineage context, alerting, and incident workflows for data assets in production.

Should data observability replace dbt tests?

Usually no. dbt tests are still valuable for explicit contracts. Observability tools complement them by watching freshness, volume, schema changes, distribution drift, lineage impact, and operational incidents.

When is a data observability platform worth it?

It is worth it when broken data repeatedly creates expensive debugging, executive confusion, customer impact, or missed decisions. If issues are rare and localized, start with simpler tests and alerts first.
